
How we found and fixed a critical auth bypass in Cloudflare's AI-generated Next.js

And what it says about the limits of AI-generated software

Paul Sanglé-Ferrière

Mar 2, 2026

Cloudflare's pitch for viNext was straightforward: a project that would normally take months, maybe years, shipped in under a week by one engineer with about $1,100 of model spend.

AI is now very good at getting a system 90% of the way there, to the point where it looks complete.

The missing 10% is the part that actually makes infrastructure trustworthy: production behavior, security invariants, edge cases, and failure modes. That is where the engineering time usually goes. It is also where viNext still had real problems.

As in our OpenClaw analysis, we ran cubic against viNext for 24 hours, using thousands of agents with effectively unlimited reasoning time.

They found hundreds of bugs and security issues. One of them was a critical auth bypass in production. We fixed it and sent the patch upstream.

Interestingly, although viNext already had 1,700+ tests ported from Next.js, the bug still made it through. The follow-up discussion eventually turned into Issue #204, which exposed a deeper problem: the testing methodology itself had gaps.

That makes viNext a useful case study, not because AI failed, but because it shows where AI still needs help: not in generation, but in verification.

The bug

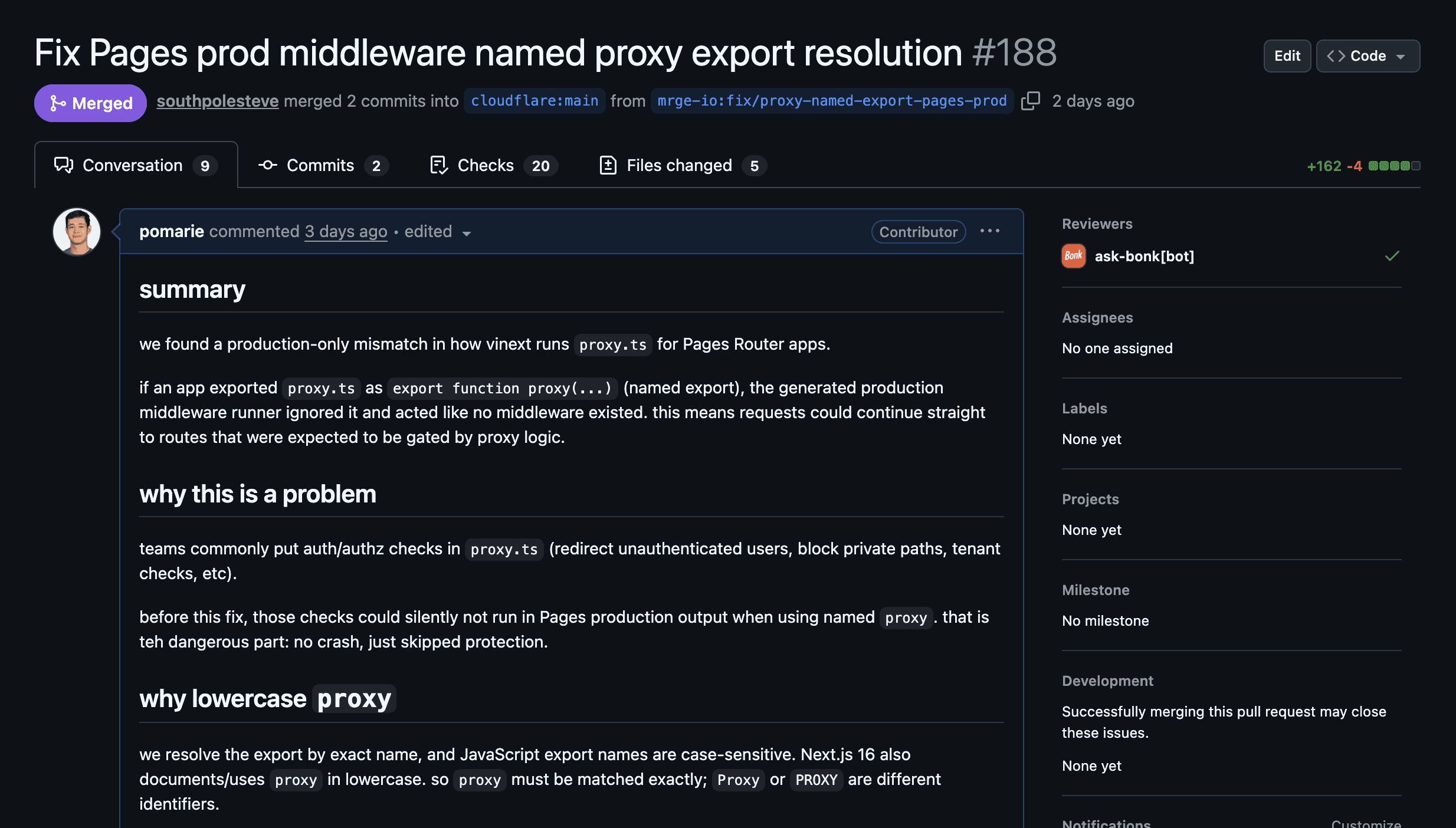

The bug was in viNext's Pages Router. In production builds, named proxy exports in proxy.ts were silently ignored.

So an application could define a proxy.ts file with a named proxy function, use it for authentication or authorization, see it work in development, and then have it disappear in production.

That is not an obscure pattern. proxy.ts is exactly where teams put request-time protection: redirect unauthenticated users to /login, block access to private routes, validate tenants, enforce headers, and so on.

The root cause was straightforward: the generated code looked for middlewareModule.default || middlewareModule.middleware but never checked for middlewareModule.proxy in the fallback chain.

Everything worked perfectly in dev, but failed silently in production builds.
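The fallback chain described above can be sketched roughly as follows. This is a minimal illustration, not viNext's actual source: the `MiddlewareModule` shape, the `ProxyRequest` type, and both resolver function names are assumptions made for the example, and the real fix in PR #188 may order or structure the lookup differently.

```typescript
// Hypothetical request and handler shapes, for illustration only.
interface ProxyRequest {
  pathname: string;
  isAuthenticated: boolean;
}
type Handler = (request: ProxyRequest) => unknown;

// Assumed shape of the loaded proxy.ts / middleware.ts module.
interface MiddlewareModule {
  default?: Handler;
  middleware?: Handler;
  proxy?: Handler;
}

// The lookup as the post describes it: `proxy` is never consulted.
function resolveHandlerBuggy(middlewareModule: MiddlewareModule): Handler | undefined {
  return middlewareModule.default || middlewareModule.middleware;
}

// One possible fix: add `proxy` to the fallback chain.
function resolveHandlerFixed(middlewareModule: MiddlewareModule): Handler | undefined {
  return middlewareModule.default || middlewareModule.middleware || middlewareModule.proxy;
}

// A proxy.ts-style module that only has a named `proxy` export.
const authModule: MiddlewareModule = {
  proxy: (request) => (request.isAuthenticated ? "next" : "redirect:/login"),
};

const buggy = resolveHandlerBuggy(authModule);
const fixed = resolveHandlerFixed(authModule);

console.log(typeof buggy); // "undefined": no handler found, so the auth check never runs
console.log(typeof fixed); // "function": the named proxy export is picked up
```

The important detail is that the buggy resolver does not throw. It returns `undefined`, and a caller that treats "no handler" as "nothing to run" lets every request through.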

We submitted the fix as PR #188. That triggered a cascade of follow-up patches (#203, #206) and eventually Issue #204, which revealed a problem deeper than the bug itself.

How we found it: cubic's codebase scans run agents specialized in finding bugs and security issues, with unlimited time and reasoning, to map out a repository and analyze every detail.

For viNext, these agents caught hundreds of issues, including this auth bypass, which we automatically fixed and submitted upstream.

1,700+ tests, and none of them caught it

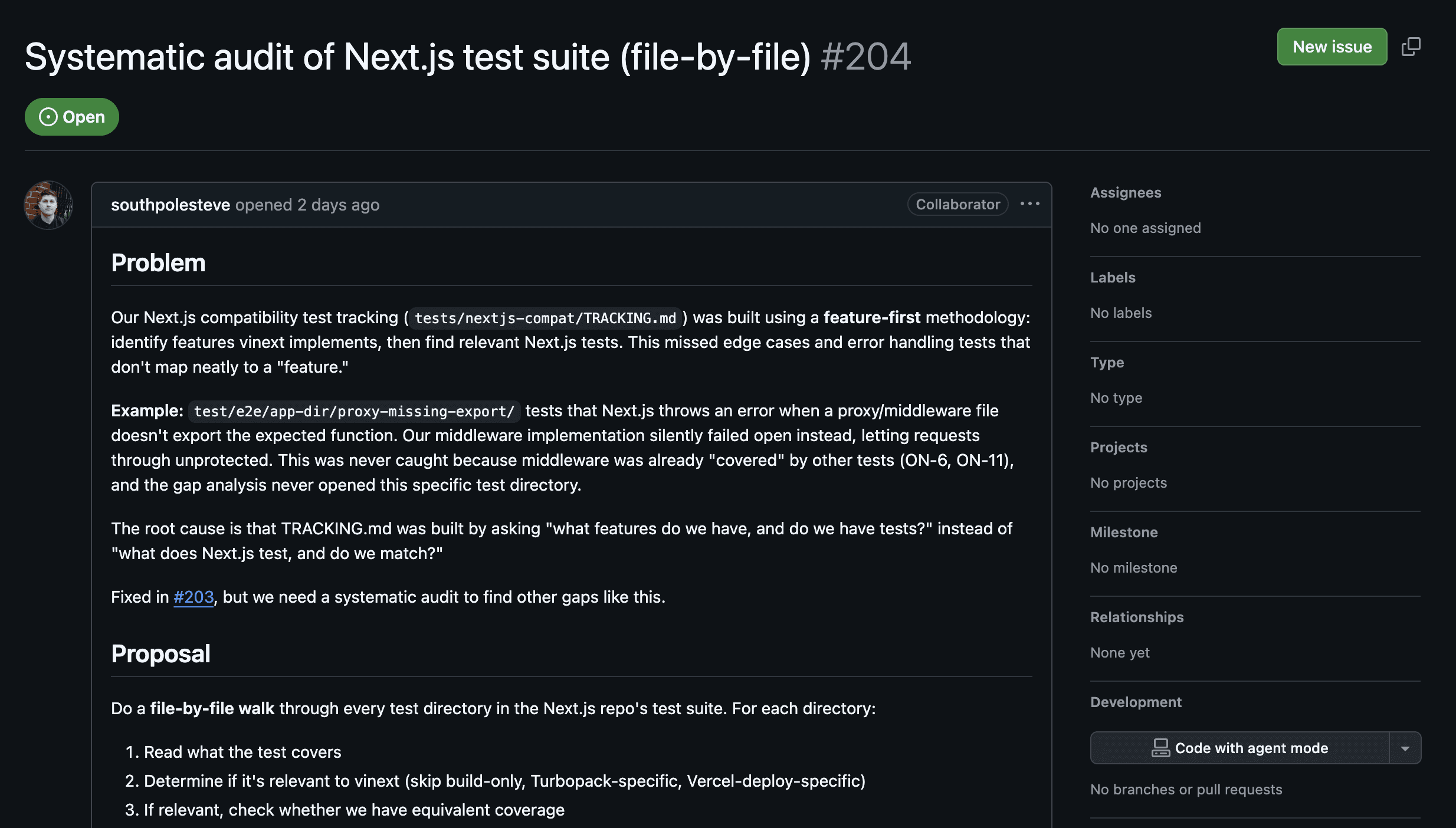

On paper, viNext looked well covered. Cloudflare had ported more than 1,700 Vitest tests and hundreds of Playwright end-to-end tests from Next.js.

The problem was not the number of tests. It was the selection strategy.

The process was feature-first: decide which viNext features existed, then port the corresponding Next.js tests. That is a sensible way to move quickly. It gives you broad happy-path coverage.

But it does not guarantee that you bring over the ugly regression tests, missing-export cases, and fail-open behavior checks that mature frameworks accumulate over years.

So middleware could look "covered" while the one test that proves it fails safely never made it over.

For example, Next.js has a dedicated test directory (test/e2e/app-dir/proxy-missing-export/) that validates what happens when middleware files lack required exports. That test was never ported because middleware was already considered "covered" by other tests.
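To make "fails safely" concrete, here is a sketch of the fail-closed behavior a missing-export test would pin down. It is not the Next.js or viNext implementation: `loadProxyHandler`, the accepted export names, and the error message are all assumptions chosen for illustration.

```typescript
// Hypothetical handler type, for illustration only.
type Handler = (request: { pathname: string }) => unknown;

// Fail closed: if the file exists but exports no usable function,
// refuse to start instead of silently serving unprotected routes.
function loadProxyHandler(filename: string, mod: Record<string, unknown>): Handler {
  const candidate = mod["default"] || mod["proxy"] || mod["middleware"];
  if (typeof candidate !== "function") {
    // Assumed wording; the real framework error may differ.
    throw new Error(
      filename + " must export a function as `default`, `proxy`, or `middleware`"
    );
  }
  return candidate as Handler;
}

// A module with a recognized named export loads normally.
const loaded = loadProxyHandler("proxy.ts", { proxy: () => "next" });
console.log(typeof loaded); // "function"
```

A regression test for this behavior asserts that loading a module such as `{ helper: () => "x" }` throws. That is the kind of check a feature-first port can skip, because the feature already appears tested.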

viNext now requires a file-by-file audit of 365+ Next.js test directories to find what else was missed.
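At its simplest, the first pass of such an audit is a set difference between upstream test directories and the ones already ported. The sketch below assumes both lists have already been collected as path strings. The function name and the sample paths are hypothetical, apart from the proxy-missing-export directory mentioned above.

```typescript
// Return upstream test directories with no ported counterpart.
// A linear lookup is fine for a few hundred directories.
function findUnportedTestDirs(upstreamDirs: string[], portedDirs: string[]): string[] {
  return upstreamDirs.filter((dir) => portedDirs.indexOf(dir) === -1).sort();
}

// Sample data: only the first path comes from the post; the others are made up.
const upstream = [
  "test/e2e/app-dir/proxy-missing-export",
  "test/e2e/hypothetical-feature-a",
  "test/e2e/hypothetical-feature-b",
];
const ported = ["test/e2e/hypothetical-feature-a"];

console.log(findUnportedTestDirs(upstream, ported));
// [ "test/e2e/app-dir/proxy-missing-export", "test/e2e/hypothetical-feature-b" ]
```

A directory-level diff only shows where to look. Each unported directory still needs a human or agent to decide whether it covers behavior the port is supposed to support.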

It's also worth noting that the proxy export pattern is from Next.js 16, released after most LLMs' training cutoff dates, so the model that generated viNext most likely had never seen it. Frameworks evolve constantly, and AI models will tend to lag behind the latest patterns.

Pre-existing tests are doing the heavy lifting

Every impressive "AI built X from scratch" project follows the same pattern: AI guided by a comprehensive, battle-tested, human-written test suite.

viNext used Next.js's 1,700+ tests as a machine-readable spec and rebuilt the framework in a week.

Anthropic's C compiler project ran 16 Claude agents for $20K to produce 100K lines of Rust, benchmarking against the GCC torture test suite and hitting a 99% pass rate.

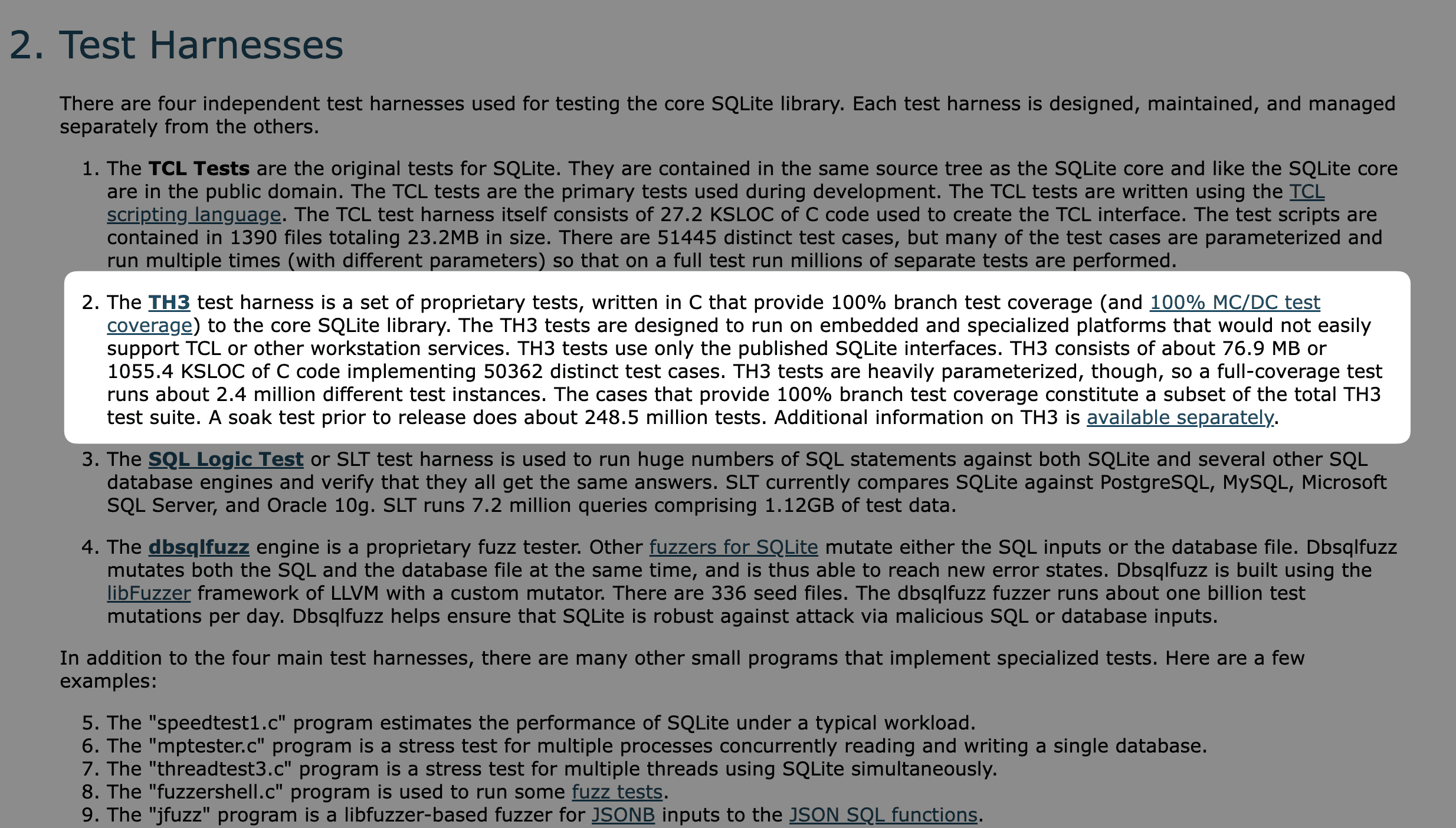

SQLite's code is public domain, but their TH3 test harness (500K+ lines, 100% branch coverage) is proprietary and requires a license. The tests are worth more than the code.

These are genuinely impressive results. But what they have in common is that the test suite existed before the AI touched anything. The tests did the heavy lifting.

Remove them and you don't get the same quality of output.

This matters because it's tempting to treat these results as proof that AI can build correct software from scratch. It can't, or at least it hasn't yet.

Passing a known spec is a fundamentally different skill from producing correct, production-safe code when no spec exists to guide you.

viNext's own auth bypass is a good example: the areas covered by pre-existing tests worked fine. The gaps where tests were missing are exactly where things broke.

Tests and deterministic quality checks are genuinely valuable for AI-assisted development. But the hard part was always writing comprehensive tests in the first place, and that hasn't changed.

What this actually means

When people cite viNext or the Anthropic compiler as evidence that "AI can build production software," they're leaving out the most important part. In every case, a comprehensive human-written test suite did the heavy lifting.

These aren't demonstrations of AI building software from scratch. They're demonstrations of AI satisfying a known spec. That's a useful capability, but it's a fundamentally different thing, and treating them as equivalent is how bad decisions get made.

AI also can't write production-ready code for critical applications by itself. Not yet. viNext shipped with hundreds of security issues and bugs. The auth bypass we found was a core security pattern silently failing in production.

cubic's codebase scans caught hundreds of issues, including this one, and we submitted the fix upstream. But even with AI assistance, getting from "works in dev" to "safe to run in production" still requires significant iteration time.

The last 10% of edge cases, security patterns, and failure modes still takes real effort.

AI generates code faster than anyone can review it manually. That's the new reality. If you want to see what's hiding in your codebase, try a scan at cubic.dev.